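■Environment setup

I downloaded the latest Raspbian (Raspbian Stretch with desktop and recommended software), wrote it to a 32 GB microSD card, inserted the card into a Raspberry Pi 3, booted it, and completed the initial setup.

Starting from that clean state, I entered the following commands.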

pip3 install matplotlib

sudo apt install libatlas-base-dev

sudo pip3 install opencv-python

sudo pip3 install opencv-contrib-python

sudo apt-get update

sudo apt-get install libhdf5-dev

sudo apt-get update

sudo apt-get install libhdf5-serial-dev

sudo apt-get install libjasper-dev

sudo apt-get install libqt4-test

sudo pip3 install chainer

sudo pip3 install chainercv
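
After a long series of installs like this, it can be useful to confirm that the packages are actually importable before running the scripts. The following is only a minimal sketch: the helper name `missing_modules` and the module list are my own assumptions (the list is based on what the scripts in this article import), and it checks only that each module can be found, not that it works.

```python
import importlib.util

# Top-level module names the scripts in this article import.
# (opencv-python and opencv-contrib-python both provide "cv2".)
REQUIRED_MODULES = ("matplotlib", "cv2", "numpy", "chainer", "chainercv")


def missing_modules(names=REQUIRED_MODULES):
    """Return the names that cannot be found on the import path."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules()
    if missing:
        print("Missing modules: " + ", ".join(missing))
    else:
        print("All required modules were found.")
```

If anything is reported as missing, rerunning the corresponding install command above is the first thing to try.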

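■Displaying Logitec webcam images in real time

I had hoped to use real-time input from the Raspberry Pi camera, but cv2.VideoCapture(0) does not seem to recognize the Pi camera as-is, so I take the images from a Logitec webcam instead.

Input from the Pi camera should be possible with some changes to the program, so I will leave that for another day.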

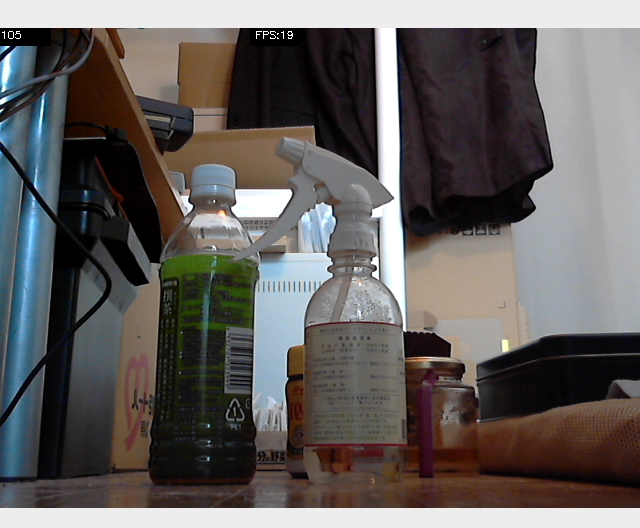

■Running the real-time webcam display

python3 Test_Cam.py 0
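
The argument 0 here selects the webcam; any other value is treated as a video file name. As a rough sketch of that rule, the hypothetical helper below (`parse_video_source` is my own name, not part of the scripts) turns the command-line string into a value that cv2.VideoCapture accepts. Note one assumption that goes beyond the original scripts: it treats any string of decimal digits, not just "0", as a camera index.

```python
def parse_video_source(arg):
    """Map a command-line argument to something cv2.VideoCapture accepts.

    A string of decimal digits such as "0" or "1" becomes an integer
    camera index; anything else is returned unchanged and treated as
    a video file path.
    """
    return int(arg) if arg.isdecimal() else arg
```

With this helper, opening the source would look like `vid = cv2.VideoCapture(parse_video_source(args.video))`.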

■Result

It displays at 19 FPS.

Even my PC only reached about 30 FPS, so the Raspberry Pi is doing better than I expected.

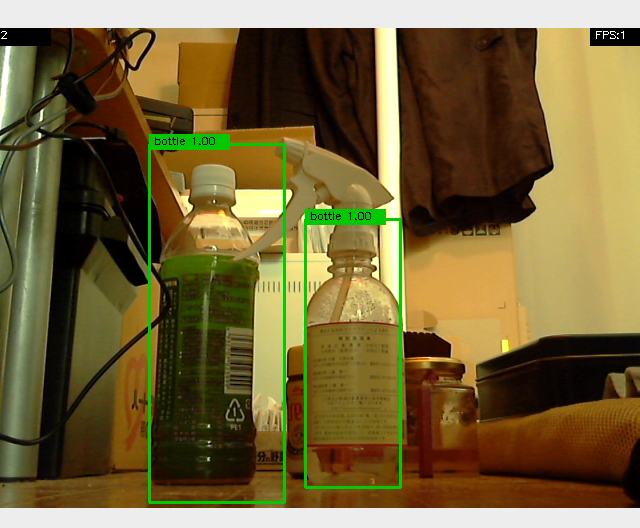

■Running yolo3.py

python3 yolo3.py 0

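■Result

Each image takes 55 seconds.

That may simply be too much to ask of a Raspberry Pi. Is there such a thing as a GPU for the Raspberry Pi, I wonder?

If you only need to count animals or objects every now and then, it should be just about usable.

Incidentally, perhaps because it uses too much CPU, the run on the Raspberry Pi sometimes aborts with a "forced termination" (強制終了) message.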
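
Since one detection takes so long, counting objects only every now and then means running the detector at fixed intervals rather than on every frame. The class below is a minimal sketch of that idea. The name `IntervalGate` and the 60-second interval in the usage note are assumptions of mine, not something the scripts in this article do.

```python
class IntervalGate:
    """Lets an expensive step run at most once per `interval` seconds."""

    def __init__(self, interval):
        self.interval = interval
        self.last_run = None  # time of the last permitted run

    def ready(self, now):
        """Return True (and record `now`) if the step may run at time `now`."""
        if self.last_run is None or now - self.last_run >= self.interval:
            self.last_run = now
            return True
        return False
```

In the capture loop this could be used as `gate = IntervalGate(60)` before the loop and `if gate.ready(timer()):` around the call to `model.predict`, so that frames in between are displayed without detection.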

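■Source

The source is the same as what I had been working on up to yesterday.

Writing it in Python is convenient because it runs unchanged on both Windows and the Raspberry Pi.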

・Test_Cam.py

import argparse
from timeit import default_timer as timer

import cv2


def main():
    parser = argparse.ArgumentParser()
    parser.add_argument('video')
    args = parser.parse_args()

    # "0" selects the webcam; anything else is treated as a video file name
    if args.video == "0":
        vid = cv2.VideoCapture(0)
    else:
        vid = cv2.VideoCapture(args.video)
    if not vid.isOpened():
        raise IOError("Couldn't open video file or webcam.")

    # Compute aspect ratio of video
    vidw = vid.get(cv2.CAP_PROP_FRAME_WIDTH)
    vidh = vid.get(cv2.CAP_PROP_FRAME_HEIGHT)
    vidar = vidw / vidh
    print(vidw)
    print(vidh)

    accum_time = 0
    curr_fps = 0
    fps = "FPS: ??"
    prev_time = timer()

    frame_count = 1
    while True:
        ret, frame = vid.read()
        if not ret:
            print("Done!")
            break

        # Resized
        im_size = (640, 480)
        resized = cv2.resize(frame, im_size)

        # =================================
        # Image Preprocessing
        # =================================

        # =================================
        # Main Processing
        result = resized.copy()  # dummy
        # result = frame.copy()  # no resize
        # =================================

        # Calculate FPS: count frames and update the label once per elapsed second
        curr_time = timer()
        exec_time = curr_time - prev_time
        prev_time = curr_time
        accum_time = accum_time + exec_time
        curr_fps = curr_fps + 1
        if accum_time > 1:
            accum_time = accum_time - 1
            fps = "FPS:" + str(curr_fps)
            curr_fps = 0

        # Draw FPS along the top edge
        cv2.rectangle(result, (250, 0), (300, 17), (0, 0, 0), -1)
        cv2.putText(result, fps, (255, 10),
                    cv2.FONT_HERSHEY_SIMPLEX, 0.35, (255, 255, 255), 1)

        # Draw Frame Number
        cv2.rectangle(result, (0, 0), (50, 17), (0, 0, 0), -1)
        cv2.putText(result, str(frame_count), (0, 10),
                    cv2.FONT_HERSHEY_SIMPLEX, 0.35, (255, 255, 255), 1)

        # Output Result
        cv2.imshow("Result", result)

        # Stop Processing when "q" is pressed
        if cv2.waitKey(1) & 0xFF == ord('q'):
            break

        frame_count += 1

    # Release the capture device and close the display window
    vid.release()
    cv2.destroyAllWindows()


if __name__ == '__main__':
    main()
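
The FPS bookkeeping in Test_Cam.py counts frames and refreshes the label each time more than one second has accumulated. As a sketch of how that logic could be isolated and checked without a camera, the class below takes the timestamps as arguments. `FpsCounter` and its method names are hypothetical and do not appear in the original script.

```python
class FpsCounter:
    """Counts frames and reports how many arrived in each elapsed second."""

    def __init__(self, start_time):
        self.prev_time = start_time
        self.accum_time = 0.0
        self.frames = 0
        self.label = "FPS: ??"  # shown until the first full second has passed

    def tick(self, now):
        """Register one frame at time `now` and return the current label."""
        self.accum_time += now - self.prev_time
        self.prev_time = now
        self.frames += 1
        if self.accum_time > 1:
            # More than one second has accumulated: publish and reset the count
            self.accum_time -= 1
            self.label = "FPS:" + str(self.frames)
            self.frames = 0
        return self.label
```

In the loop, `fps = counter.tick(timer())` would replace the inline calculation, with `counter = FpsCounter(timer())` created before the loop.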

・yolo3.py

import time

import argparse

import matplotlib.pyplot as plt

import cv2

import numpy as np

from timeit import default_timer as timer

import chainer

from chainercv.datasets import voc_bbox_label_names

from chainercv.datasets import coco_bbox_label_names

from chainercv.links import YOLOv3

# Color table (supports up to 150 classes)

label_colors = (

(120, 120, 120),

(180, 120, 120),

(6, 230, 230),

(80, 50, 50),

(4, 200, 3),

(120, 120, 80),

(140, 140, 140),

(204, 5, 255),

(230, 230, 230),

(4, 250, 7),

(224, 5, 255),

(235, 255, 7),

(150, 5, 61),

(120, 120, 70),

(8, 255, 51),

(255, 6, 82),

(143, 255, 140),

(204, 255, 4),

(255, 51, 7),

(204, 70, 3),

(0, 102, 200),

(61, 230, 250),

(255, 6, 51),

(11, 102, 255),

(255, 7, 71),

(255, 9, 224),

(9, 7, 230),

(220, 220, 220),

(255, 9, 92),

(112, 9, 255),

(8, 255, 214),

(7, 255, 224),

(255, 184, 6),

(10, 255, 71),

(255, 41, 10),

(7, 255, 255),

(224, 255, 8),

(102, 8, 255),

(255, 61, 6),

(255, 194, 7),

(255, 122, 8),

(0, 255, 20),

(255, 8, 41),

(255, 5, 153),

(6, 51, 255),

(235, 12, 255),

(160, 150, 20),

(0, 163, 255),

(140, 140, 140),

(250, 10, 15),

(20, 255, 0),

(31, 255, 0),

(255, 31, 0),

(255, 224, 0),

(153, 255, 0),

(0, 0, 255),

(255, 71, 0),

(0, 235, 255),

(0, 173, 255),

(31, 0, 255),

(11, 200, 200),

(255, 82, 0),

(0, 255, 245),

(0, 61, 255),

(0, 255, 112),

(0, 255, 133),

(255, 0, 0),

(255, 163, 0),

(255, 102, 0),

(194, 255, 0),

(0, 143, 255),

(51, 255, 0),

(0, 82, 255),

(0, 255, 41),

(0, 255, 173),

(10, 0, 255),

(173, 255, 0),

(0, 255, 153),

(255, 92, 0),

(255, 0, 255),

(255, 0, 245),

(255, 0, 102),

(255, 173, 0),

(255, 0, 20),

(255, 184, 184),

(0, 31, 255),

(0, 255, 61),

(0, 71, 255),

(255, 0, 204),

(0, 255, 194),

(0, 255, 82),

(0, 10, 255),

(0, 112, 255),

(51, 0, 255),

(0, 194, 255),

(0, 122, 255),

(0, 255, 163),

(255, 153, 0),

(0, 255, 10),

(255, 112, 0),

(143, 255, 0),

(82, 0, 255),

(163, 255, 0),

(255, 235, 0),

(8, 184, 170),

(133, 0, 255),

(0, 255, 92),

(184, 0, 255),

(255, 0, 31),

(0, 184, 255),

(0, 214, 255),

(255, 0, 112),

(92, 255, 0),

(0, 224, 255),

(112, 224, 255),

(70, 184, 160),

(163, 0, 255),

(153, 0, 255),

(71, 255, 0),

(255, 0, 163),

(255, 204, 0),

(255, 0, 143),

(0, 255, 235),

(133, 255, 0),

(255, 0, 235),

(245, 0, 255),

(255, 0, 122),

(255, 245, 0),

(10, 190, 212),

(214, 255, 0),

(0, 204, 255),

(20, 0, 255),

(255, 255, 0),

(0, 153, 255),

(0, 41, 255),

(0, 255, 204),

(41, 0, 255),

(41, 255, 0),

(173, 0, 255),

(0, 245, 255),

(71, 0, 255),

(122, 0, 255),

(0, 255, 184),

(0, 92, 255),

(184, 255, 0),

(0, 133, 255),

(255, 214, 0),

(25, 194, 194),

(102, 255, 0),

(92, 0, 255),

(0, 0, 0)

)

def main():
    parser = argparse.ArgumentParser()
    parser.add_argument('--gpu', type=int, default=-1)
    parser.add_argument('--pretrained-model', default='voc0712')
    parser.add_argument('--class_num', default=20)
    parser.add_argument('--class_list', default=0)
    parser.add_argument('video')
    args = parser.parse_args()

    #
    # Argument parsing
    #   python yolo3.py 0
    #     By default the voc0712 model is downloaded. It recognizes 20 classes.
    #
    #   python yolo3.py --pretrained-model yolov3.weights.npz --class_num 80 --class_list yolov3.list 0
    #     --pretrained-model
    #         Trained model yolov3.weights.npz converted with darknet2npz.py
    #     --class_num
    #         Number of classes in that trained model
    #     --class_list
    #         List of class names for that trained model, one class per line
    #     video
    #         0 for a webcam
    #         File name for a video file
    #
    if args.pretrained_model == 'voc0712':
        label_names = voc_bbox_label_names
        model = YOLOv3(20, 'voc0712')
    else:
        print(args.class_list)
        if args.class_list == 0:
            label_names = coco_bbox_label_names
        else:
            # Read the class names, one per line
            with open(args.class_list, "r") as f:
                label_names = [line.strip() for line in f]
        model = YOLOv3(n_fg_class=int(args.class_num),
                       pretrained_model=args.pretrained_model)

    # GPU support
    #   CPU: omit --gpu
    #   GPU: --gpu 0
    if args.gpu >= 0:
        chainer.cuda.get_device_from_id(args.gpu).use()
        model.to_gpu()

    #
    # Print the list of supported class names
    #
    for name in label_names:
        print(name)

    #
    # Open the webcam or video file
    #
    if args.video == "0":
        vid = cv2.VideoCapture(0)
    else:
        vid = cv2.VideoCapture(args.video)
    if not vid.isOpened():
        raise IOError("Couldn't open video file or webcam.")

    # Print the frame size of the video
    vidw = vid.get(cv2.CAP_PROP_FRAME_WIDTH)
    vidh = vid.get(cv2.CAP_PROP_FRAME_HEIGHT)
    # vidar = vidw / vidh
    print(vidw)
    print(vidh)

    accum_time = 0
    curr_fps = 0
    fps = "FPS: ??"
    prev_time = timer()

    frame_count = 1
    while True:
        ret, frame = vid.read()
        if not ret:
            time.sleep(5)
            print("Done!")
            break

        # BGR -> RGB
        rgb = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)

        # Result image
        result = frame.copy()

        # (H, W, C) -> (C, H, W)
        img = np.asarray(rgb, dtype=np.float32).transpose((2, 0, 1))

        # Object Detection
        bboxes, labels, scores = model.predict([img])
        bbox, label, score = bboxes[0], labels[0], scores[0]

        print("----------")
        nPerson = 0
        nBottle = 0
        if len(bbox) != 0:
            for i, bb in enumerate(bbox):
                # print(i)
                lb = label[i]
                conf = score[i].tolist()
                # ChainerCV boxes are ordered (ymin, xmin, ymax, xmax)
                ymin = int(bb[0])
                xmin = int(bb[1])
                ymax = int(bb[2])
                xmax = int(bb[3])

                class_num = int(lb)

                # Draw box 1
                cv2.rectangle(result, (xmin, ymin), (xmax, ymax),
                              label_colors[class_num], 2)

                # Draw box 2
                # cv2.rectangle(result, (xmin, ymin), (xmax, ymax), (0,255,0), 2)

                text = label_names[class_num] + " " + ('%.2f' % conf)
                print(text)

                if label_names[class_num] == 'person':
                    nPerson = nPerson + 1
                if label_names[class_num] == 'bottle':
                    nBottle = nBottle + 1

                text_top = (xmin, ymin - 10)
                text_bot = (xmin + 80, ymin + 5)
                text_pos = (xmin + 5, ymin)

                # Draw label 1
                cv2.rectangle(result, text_top, text_bot,
                              label_colors[class_num], -1)
                cv2.putText(result, text, text_pos,
                            cv2.FONT_HERSHEY_SIMPLEX, 0.35, (0, 0, 0), 1)

                # Draw label 2
                # cv2.rectangle(result, text_top, text_bot, (255,255,255), -1)
                # cv2.putText(result, text, text_pos,
                #             cv2.FONT_HERSHEY_SIMPLEX, 0.35, (0, 0, 0), 1)

        print("==========")
        print("Number of people : " + str(nPerson))
        print("Number of bottle : " + str(nBottle))

        # Calculate FPS: count frames and update the label once per elapsed second
        curr_time = timer()
        exec_time = curr_time - prev_time
        prev_time = curr_time
        accum_time = accum_time + exec_time
        curr_fps = curr_fps + 1
        if accum_time > 1:
            accum_time = accum_time - 1
            fps = "FPS:" + str(curr_fps)
            curr_fps = 0

        # Draw FPS in top right corner (assumes a 640-pixel-wide frame)
        cv2.rectangle(result, (590, 0), (640, 17), (0, 0, 0), -1)
        cv2.putText(result, fps, (595, 10),
                    cv2.FONT_HERSHEY_SIMPLEX, 0.35, (255, 255, 255), 1)

        # Draw Frame Number
        cv2.rectangle(result, (0, 0), (50, 17), (0, 0, 0), -1)
        cv2.putText(result, str(frame_count), (0, 10),
                    cv2.FONT_HERSHEY_SIMPLEX, 0.35, (255, 255, 255), 1)

        # Output Result
        cv2.imshow("Yolo Result", result)

        # Stop Processing when "q" is pressed
        if cv2.waitKey(1) & 0xFF == ord('q'):
            break

        frame_count += 1

    # Release the capture device and close the display window
    vid.release()
    cv2.destroyAllWindows()


if __name__ == '__main__':
    main()
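
yolo3.py counts only people and bottles, using one variable per class. If you want counts for every detected class, one possible approach is to tally the predicted label indices with collections.Counter. This is just a sketch: `count_detections` is a hypothetical helper of mine, and it assumes the labels are integer indices into the class name list, as they are in the script above.

```python
from collections import Counter


def count_detections(labels, label_names):
    """Count detections per class name.

    labels      -- iterable of integer class indices (one per detected box)
    label_names -- sequence mapping a class index to its name
    """
    return Counter(label_names[int(lb)] for lb in labels)
```

For example, `counts = count_detections(label, label_names)` after `model.predict` would give `counts['person']` and `counts['bottle']`, and a class that was not detected simply counts as 0.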